As organizations increasingly blend human expertise with artificial intelligence, measuring performance in hybrid workflows has become both more complex and more critical. Traditional productivity metrics are no longer sufficient when tasks are shared between people and algorithms. Leaders now require specialized tools that track efficiency, quality, collaboration, and accountability across integrated human-AI systems. Understanding which tools provide meaningful insights is essential for optimizing output while maintaining transparency and trust.

TLDR: Hybrid human-AI workflows require specialized performance tracking tools that measure both human input and algorithmic output. Modern platforms combine analytics dashboards, collaboration monitoring, model evaluation metrics, and workflow visualization to give organizations visibility across the whole process. Success depends on tracking accuracy, speed, usability, and alignment with business goals. Choosing the right tools supports transparency, accountability, and continuous optimization in AI-augmented environments.

The Rise of Hybrid Human-AI Workflows

Hybrid workflows combine human decision-making with automated data processing and AI-driven recommendations. In marketing, for instance, AI systems may generate content drafts that humans refine. In healthcare, diagnostic AI tools assist doctors who make final decisions. In finance, algorithms detect anomalies while analysts interpret findings.

This blending of roles demands a new approach to performance monitoring. It is no longer enough to measure employee productivity alone. Organizations must also assess:

- Algorithm accuracy

- Time saved through automation

- Quality improvements or declines

- Collaboration efficiency

- Decision transparency and traceability

Performance tracking in hybrid environments sits at the intersection of human resource management, data analytics, and AI governance.

Core Categories of Performance Tracking Tools

1. Workflow Analytics Platforms

Workflow analytics platforms provide visibility into task progress, bottlenecks, and output metrics. These tools visualize how work moves between humans and AI systems.

Key features typically include:

- Task completion time tracking

- Automation rate measurement

- Bottleneck identification

- Process mapping dashboards

- Resource utilization reports

By mapping workflow paths, managers can determine whether AI tools accelerate operations or introduce friction. For example, if human review of AI output takes longer than creating the same work manually, the AI step is adding time rather than saving it, and the workflow needs to be adjusted.
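The comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the method of any particular platform: the `TaskRecord` fields and the sample values are hypothetical, and it assumes each task logs how long the first draft and the human review took.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    """One completed task. All field names are hypothetical."""
    task_id: str
    ai_assisted: bool        # True if an AI system produced the first draft
    creation_minutes: float  # time to produce the first draft (AI or human)
    review_minutes: float    # human time spent reviewing or correcting it


def automation_rate(tasks):
    """Share of tasks in which AI produced the first draft."""
    if not tasks:
        return 0.0
    return sum(t.ai_assisted for t in tasks) / len(tasks)


def mean_human_minutes(tasks, ai_assisted):
    """Average human time per task for the AI-assisted or the manual group."""
    group = [t for t in tasks if t.ai_assisted == ai_assisted]
    if not group:
        return None
    # For AI-assisted tasks only the review counts as human time;
    # for manual tasks both creation and review are human time.
    return mean(
        t.review_minutes if t.ai_assisted else t.creation_minutes + t.review_minutes
        for t in group
    )


# Illustrative sample data, not real measurements.
tasks = [
    TaskRecord("t1", True, 1.0, 20.0),
    TaskRecord("t2", True, 1.0, 30.0),
    TaskRecord("t3", False, 40.0, 5.0),
    TaskRecord("t4", False, 30.0, 5.0),
]

rate = automation_rate(tasks)
ai_minutes = mean_human_minutes(tasks, ai_assisted=True)
manual_minutes = mean_human_minutes(tasks, ai_assisted=False)

# If reviewing AI output costs more human time than manual work,
# the AI step is adding friction instead of removing it.
ai_adds_friction = ai_minutes > manual_minutes
```

In this sample, half the tasks are AI-assisted and reviewing AI drafts takes less human time than manual work, so no friction is flagged.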

Advanced platforms often integrate with enterprise systems to provide real-time monitoring and cross-departmental reporting.

2. AI Model Performance Monitoring Tools

When algorithms contribute to decisions, their performance must be continuously evaluated. AI monitoring tools analyze:

- Accuracy rates

- Precision and recall metrics

- Bias detection

- Model drift over time

- Confidence scoring reliability

Model drift monitoring is especially crucial. As data patterns evolve, AI performance can degrade. Tools that track predictive consistency and alert teams to anomalies help maintain reliability.
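As a rough sketch of what such monitoring computes, the following Python example derives precision and recall for a binary classifier and flags possible drift when recent accuracy falls below a baseline. It assumes ground-truth labels eventually become available for comparison; the function names, the 0.05 tolerance, and the sample labels are illustrative assumptions, and production tools typically use richer statistical tests.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = positive, 0 = negative)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def drift_alert(baseline_accuracy, recent_accuracy, tolerance=0.05):
    """Flag possible drift when accuracy drops more than `tolerance` below baseline."""
    return (baseline_accuracy - recent_accuracy) > tolerance


# Illustrative labels for a recent batch of predictions.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 0, 1, 1, 1, 0, 0]

precision, recall = precision_recall(y_true, y_pred)
recent_accuracy = accuracy(y_true, y_pred)

# Baseline of 0.90 is an assumed value from an earlier evaluation period.
needs_attention = drift_alert(baseline_accuracy=0.90, recent_accuracy=recent_accuracy)
```

A falling accuracy is only a proxy for drift: it shows that performance has degraded, not why, so an alert like this is a prompt for investigation rather than a diagnosis.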

Some monitoring systems also log decision explanations, enabling administrators to review why a system produced a particular output. This enhances accountability and regulatory compliance.

3. Human Productivity and Engagement Metrics

Hybrid workflows must still track human contributions. However, the metrics shift from output volume to value-added input. Instead of counting tasks completed, organizations evaluate:

- Quality of edits made to AI output

- Response time to AI suggestions

- Decision override frequency

- Creative enhancements beyond automated drafts

Employee engagement surveys also help determine whether AI tools empower workers or create frustration. Performance tracking without understanding user sentiment offers an incomplete picture.
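Two of the human-side metrics listed above, decision override frequency and response time to AI suggestions, are simple to compute once each suggestion is logged. The sketch below assumes a hypothetical log format with a `response_seconds` field and an `outcome` of `accepted`, `edited`, or `overridden`; real systems will name and structure these differently.

```python
from statistics import median

# Illustrative log of how people handled AI suggestions (hypothetical schema).
suggestions = [
    {"id": "s1", "response_seconds": 30, "outcome": "accepted"},
    {"id": "s2", "response_seconds": 90, "outcome": "edited"},
    {"id": "s3", "response_seconds": 45, "outcome": "overridden"},
    {"id": "s4", "response_seconds": 60, "outcome": "accepted"},
]


def override_frequency(records):
    """Share of AI suggestions that a human rejected outright."""
    if not records:
        return 0.0
    return sum(r["outcome"] == "overridden" for r in records) / len(records)


def median_response_seconds(records):
    """Median time between a suggestion appearing and a human acting on it."""
    return median(r["response_seconds"] for r in records)


override_rate = override_frequency(suggestions)
typical_response = median_response_seconds(suggestions)
```

The median is used here instead of the mean so that a few very slow responses do not dominate the figure; that is a design choice, not a requirement.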

4. Collaboration Intelligence Tools

Hybrid systems often require coordination between human teams and AI interfaces. Collaboration intelligence tools measure how effectively this coordination occurs.

These platforms analyze patterns such as:

- Communication frequency

- Revision loops between AI and humans

- Approval cycle times

- Feedback integration speed

By examining these metrics, organizations can identify training gaps or user interface issues affecting productivity.
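Revision loops and approval cycle times can both be derived from a timestamped event log. The following is a minimal sketch under the assumption that each work item emits events of kind `ai_draft`, `human_revision`, and `approved`; those event names, the tuple layout, and the sample timestamps are all hypothetical.

```python
from datetime import datetime

# (item_id, event_kind, ISO 8601 timestamp). Illustrative data only.
events = [
    ("doc-1", "ai_draft", "2024-01-01T09:00:00"),
    ("doc-1", "human_revision", "2024-01-01T09:30:00"),
    ("doc-1", "ai_draft", "2024-01-01T09:40:00"),
    ("doc-1", "human_revision", "2024-01-01T10:00:00"),
    ("doc-1", "approved", "2024-01-01T11:00:00"),
]


def revision_loops(log, item_id):
    """Number of times a human sent the item back for another pass."""
    return sum(1 for i, kind, _ in log if i == item_id and kind == "human_revision")


def approval_cycle_hours(log, item_id):
    """Hours from the first recorded event to the first approval, or None."""
    times = [datetime.fromisoformat(ts) for i, _, ts in log if i == item_id]
    approved = [
        datetime.fromisoformat(ts)
        for i, kind, ts in log
        if i == item_id and kind == "approved"
    ]
    if not times or not approved:
        return None
    return (min(approved) - min(times)).total_seconds() / 3600


loops = revision_loops(events, "doc-1")
cycle_hours = approval_cycle_hours(events, "doc-1")
```

A consistently high loop count for one team or one interface is the kind of pattern that may point to a training gap or a usability problem.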

Advanced Monitoring Capabilities

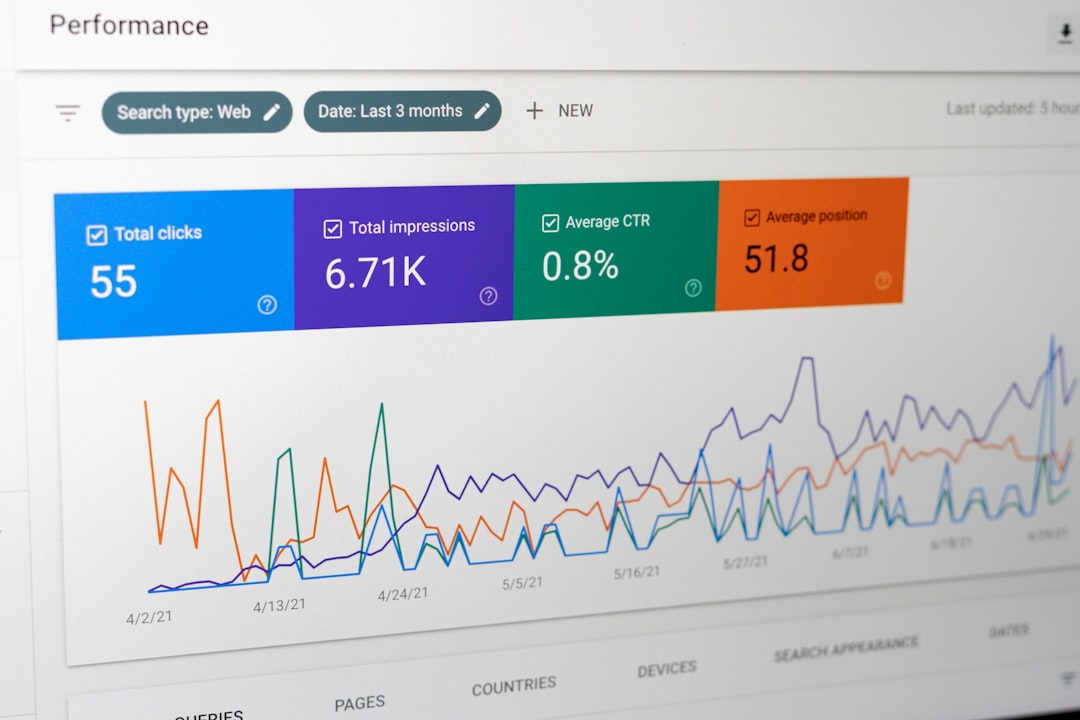

Real-Time Performance Dashboards

Modern hybrid tracking tools rely heavily on real-time dashboards. These dashboards blend multiple data streams into a unified view, allowing managers to see:

- Human task progress

- AI system status

- Error rates

- Approval bottlenecks

- Throughput volumes

Real-time monitoring helps catch small inefficiencies before they escalate. For example, if AI output suddenly requires more manual correction than usual, it may signal model drift or training data issues.
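One way a dashboard might detect a sudden rise in manual correction is a rolling-window rate with a threshold. The sketch below is an assumption about how such an alert could work, not a description of any specific product; the class name, window size, and threshold are hypothetical.

```python
from collections import deque


class CorrectionRateMonitor:
    """Tracks the share of recent AI outputs that needed manual correction."""

    def __init__(self, window=50, threshold=0.3):
        self.window = deque(maxlen=window)  # keeps only the most recent results
        self.threshold = threshold

    def rate(self):
        """Correction rate over the current window (0.0 if empty)."""
        return sum(self.window) / len(self.window) if self.window else 0.0

    def alert(self):
        # Only alert once the window is full, to avoid noisy early readings.
        return len(self.window) == self.window.maxlen and self.rate() > self.threshold

    def record(self, needed_correction):
        """Log one reviewed output and return whether an alert is active."""
        self.window.append(bool(needed_correction))
        return self.alert()


# Small window and sample stream chosen for illustration.
monitor = CorrectionRateMonitor(window=5, threshold=0.4)
stream = [False, False, True, False, False, True, True]
alerts = [monitor.record(x) for x in stream]
```

With this sample stream the alert fires only on the last item, when three of the five most recent outputs needed correction.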

Explainability and Traceability Systems

Transparency in AI-driven workflows is essential, especially in regulated industries. Tools that provide explainable AI logs help organizations:

- Track which decisions involved automation

- Record human intervention points

- Audit decision rationales

- Ensure compliance reporting

This traceability contributes to ethical AI governance and strengthens stakeholder trust.
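A traceability log of this kind boils down to one structured record per decision. The sketch below shows one possible shape for such a record; the `DecisionRecord` fields, the action labels, and the sample entries are hypothetical, and real compliance requirements will dictate what must actually be stored.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class DecisionRecord:
    """One auditable decision. Field names are illustrative assumptions."""
    decision_id: str
    automated: bool                 # did an AI system take part?
    model_version: Optional[str]    # which model produced the output, if any
    ai_output: Optional[str]
    rationale: str                  # explanation logged for later audit
    human_reviewer: Optional[str] = None
    human_action: Optional[str] = None  # e.g. "approved", "edited", "overridden"

    def to_json(self):
        """Serialize the record for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)


def involved_automation(records):
    """IDs of decisions in which an AI system took part."""
    return [r.decision_id for r in records if r.automated]


def human_interventions(records):
    """IDs of decisions where a human changed or rejected the AI output."""
    return [r.decision_id for r in records if r.human_action in {"edited", "overridden"}]


# Illustrative records only.
records = [
    DecisionRecord("d1", True, "model-v1", "flag transaction",
                   "anomaly score above threshold",
                   human_reviewer="analyst_a", human_action="overridden"),
    DecisionRecord("d2", True, "model-v1", "approve transaction",
                   "anomaly score below threshold",
                   human_reviewer="analyst_b", human_action="approved"),
    DecisionRecord("d3", False, None, None,
                   "manual review requested by customer",
                   human_reviewer="analyst_a", human_action="approved"),
]

automated_ids = involved_automation(records)
intervention_ids = human_interventions(records)
```

Each record answers the questions in the list above: whether automation was involved, where a human intervened, and what rationale was logged.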

Cost and ROI Tracking Tools

Hybrid workflows are often introduced to improve efficiency or reduce costs. Performance tracking tools therefore include financial analytics modules that compare:

- Labor cost savings

- Time-to-delivery improvements

- Error reduction impact

- Scalability gains

Measuring return on investment helps verify that AI adoption delivers tangible business value rather than mere technological novelty.
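The underlying arithmetic is the standard ROI formula, (benefit - cost) / cost. The sketch below applies it to made-up figures; the variable names and amounts are purely illustrative, and deciding which savings count as benefit is a business judgment the code does not make.

```python
def roi(total_benefit, total_cost):
    """Return ROI as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


# Hypothetical annual figures, for illustration only.
labor_savings = 120_000.0
error_reduction_savings = 30_000.0
ai_program_cost = 100_000.0

annual_roi = roi(labor_savings + error_reduction_savings, ai_program_cost)
```

With these sample figures the result is 0.5, meaning every unit of spend returned 1.5 units of benefit.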

Integration and Interoperability Considerations

No tool operates effectively in isolation. Hybrid performance tracking systems must integrate with:

- Project management software

- Customer relationship management platforms

- Human resource systems

- Data warehouses

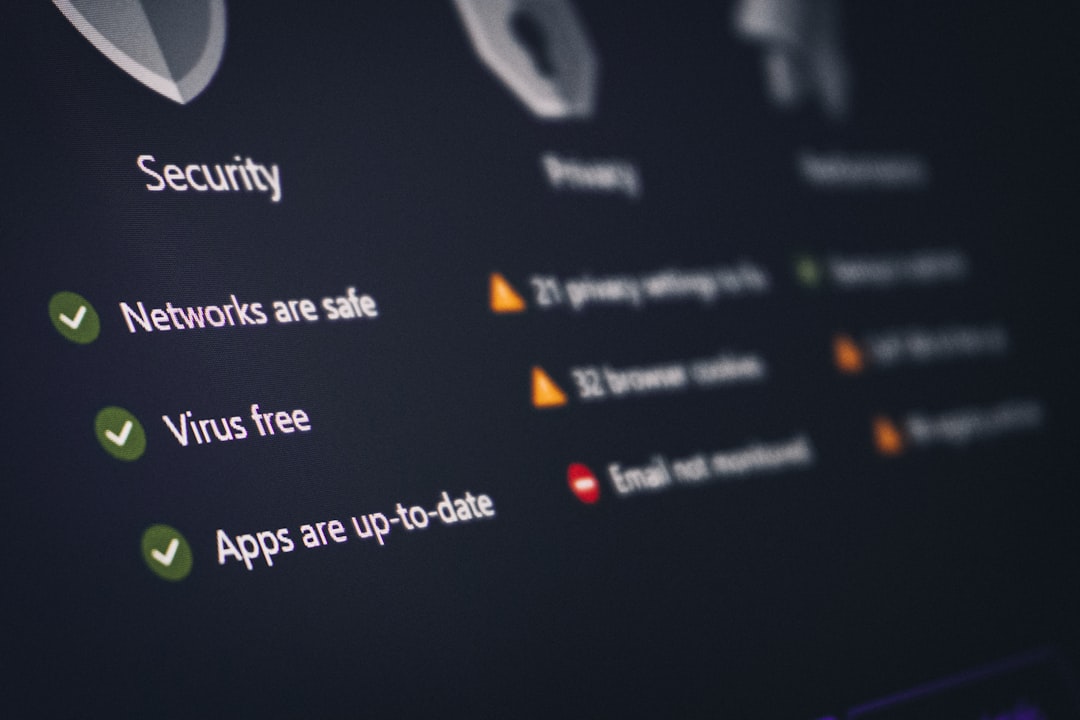

- Security and compliance frameworks

Seamless integration avoids fragmented reporting. It ensures that every step of a hybrid process, from AI input to human approval, is recorded and measurable.

Key Metrics for Evaluating Hybrid Workflow Success

Organizations tracking hybrid performance often rely on a core set of measurable indicators:

Efficiency Metrics

- Cycle time reduction percentage

- Automation coverage rate

- Throughput increase

Quality Metrics

- Error reduction rates

- Customer satisfaction scores

- Human override ratios

Adoption Metrics

- Active user participation

- Feature usage frequency

- Training completion rates

Risk Metrics

- Bias detection incidents

- Compliance violations

- Security breach attempts

By combining these indicators, organizations get a holistic view of system health instead of optimizing one dimension at the expense of another.
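Several of the efficiency indicators above are percentage changes between a baseline period and a current period. A minimal sketch, with a hypothetical helper name and illustrative numbers:

```python
def percent_change(before, after):
    """Percentage change from `before` to `after`."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


# Illustrative values: average cycle time in hours, tasks completed per week.
cycle_time_reduction = -percent_change(before=10.0, after=7.5)
throughput_increase = percent_change(before=200, after=250)
```

Cycle time reduction is negated because a drop in cycle time is the improvement being reported. Both sample values come out to 25 percent.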

Challenges in Tracking Hybrid Performance

Despite technological advancements, hybrid monitoring presents unique challenges.

Attribution Complexity

It can be difficult to determine whether success stems from human effort, AI capability, or their combination. Performance tools must therefore enable detailed activity breakdowns to clarify contributions.

Data Privacy Concerns

Monitoring both human behavior and AI processes generates large volumes of sensitive data. Effective tools must include encryption, access controls, and compliance mechanisms to protect information.

Over-Monitoring Risks

Excessive tracking may reduce morale. Organizations must strike a balance between performance visibility and employee autonomy.

Best Practices for Selecting Performance Tracking Tools

When evaluating options, leaders should prioritize platforms that:

- Provide unified reporting across human and AI activities

- Offer customizable metrics aligned with business objectives

- Include explainability and compliance features

- Support real-time alerts and notifications

- Scale as AI systems grow more complex

It is also essential to run a pilot before full-scale implementation. A pilot helps confirm that the chosen metrics are meaningful and that the tool does not introduce unnecessary complexity.

The Future of Hybrid Performance Monitoring

As AI systems become more autonomous, performance tracking tools will likely incorporate predictive analytics. These advanced systems may forecast bottlenecks before they occur, recommend workflow adjustments, and automatically retrain models when performance dips.

Additionally, ethical oversight features will expand. Tools may include automated bias scanning, fairness scoring, and transparent documentation of decision logic. Human-AI collaboration metrics will evolve beyond productivity to include creativity, innovation, and strategic value generation.

Ultimately, performance tracking in hybrid workflows will move from reactive measurement to proactive optimization.

FAQ

1. Why are traditional productivity tools insufficient for hybrid human-AI workflows?

Traditional tools typically measure only human output. Hybrid workflows require evaluation of both algorithm performance and human interaction with automated systems, including accuracy, efficiency, and quality improvements.

2. What is model drift, and why should it be monitored?

Model drift occurs when AI predictions become less accurate because the data the model sees in production no longer matches the patterns it was trained on. Monitoring drift helps teams catch this degradation early and keep outputs reliable over time.

3. How can organizations measure human contribution in AI-assisted tasks?

They can track edit quality, override frequency, creative input, and decision validation efforts rather than simply counting completed tasks.

4. What role does explainability play in performance tracking?

Explainability logs allow organizations to understand how AI systems produce decisions, ensuring transparency, accountability, and regulatory compliance.

5. Are real-time dashboards necessary?

Not in every case, but they are valuable wherever delays are costly. Real-time dashboards provide immediate insight into system performance, helping organizations address inefficiencies before they escalate.

6. How can companies avoid over-monitoring employees?

Companies can avoid over-monitoring by focusing on workflow improvement rather than individual surveillance, setting clear data governance policies, and being transparent about which metrics are tracked.

7. What is the most important metric in hybrid performance tracking?

There is no single universal metric. Success depends on aligning performance indicators with organizational objectives, whether that involves efficiency, quality, compliance, or innovation.