AI tools are powerful. But they can also be expensive. Every prompt, every token, every API call adds up. If you are building with large language models, your bill can grow fast. That is where AI cost optimization platforms like Helicone come in. They help you see what you are spending. And more importantly, they help you control it.

TL;DR: AI API costs can spiral out of control if you are not tracking usage closely. Platforms like Helicone, Langfuse, and Portkey help you monitor, analyze, and reduce your spending. They show you detailed logs, token usage, and performance data in real time. With the right insights, you can cut waste, optimize prompts, and build smarter AI apps without breaking the bank.

Let’s break this down in a simple way.

Why AI API Costs Get Out of Control

Most AI providers charge you based on:

- Tokens used (input and output)

- Number of API requests

- Model type (some models are more expensive)

- Fine-tuning and storage

At first, costs seem small. A few cents here. A few cents there. But once your app goes live, traffic increases.

Now multiply:

- Thousands of users

- Long conversations

- Large context windows

- Verbose responses

Suddenly your monthly AI bill is scary.
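To make the multiplication concrete, here is a minimal sketch of the arithmetic. The prices, traffic numbers, and names (`ModelPrice`, `request_cost`, `monthly_cost`) are made up for illustration. Check your provider's pricing page for real rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Hypothetical prices in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: input and output tokens are priced separately."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


def monthly_cost(price: ModelPrice, users: int, requests_per_user: int,
                 input_tokens: int, output_tokens: int) -> float:
    """Scale the per-request cost by users and requests per user per month."""
    return users * requests_per_user * request_cost(price, input_tokens, output_tokens)


# Assumed example price, not a real provider rate.
EXAMPLE_PRICE = ModelPrice(input_per_million=2.0, output_per_million=8.0)

# One request with 1,000 input tokens and 500 output tokens costs well under a cent.
single = request_cost(EXAMPLE_PRICE, 1_000, 500)

# The same request, sent 100 times a month by 5,000 users, is about $3,000.
bill = monthly_cost(EXAMPLE_PRICE, users=5_000, requests_per_user=100,
                    input_tokens=1_000, output_tokens=500)
```

The per-request number looks harmless. The monthly number does not. That gap is the whole problem.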

The biggest problem? Many developers do not know where the money is going.

That is like driving a car with no fuel gauge.

What Is an AI Cost Optimization Platform?

An AI cost optimization platform typically sits between your app and the AI provider as a proxy or gateway. Some hook into your code through an SDK instead.

It tracks:

- Every request

- Every response

- Token counts

- Latency

- Errors

- Costs per user or feature

Think of it as a smart dashboard for your AI usage.

Instead of guessing, you see clear data. In real time.

This helps you:

- Find waste

- Reduce unnecessary tokens

- Switch to cheaper models when possible

- Set limits and alerts

- Understand user behavior
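Under the hood, this kind of tracking boils down to one record per request plus some grouping. The sketch below is a simplified illustration, not any platform's actual data model. `RequestLog` and `cost_by` are hypothetical names.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RequestLog:
    """One logged LLM call (hypothetical schema for illustration)."""
    user_id: str
    feature: str
    model: str
    input_tokens: int
    output_tokens: int
    latency_ms: float
    cost_usd: float
    error: bool = False


def cost_by(logs: list[RequestLog], key: str) -> dict[str, float]:
    """Sum cost per value of `key` (e.g. "user_id" or "feature"), highest first."""
    totals: dict[str, float] = defaultdict(float)
    for log in logs:
        totals[getattr(log, key)] += log.cost_usd
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))
```

Calling `cost_by(logs, "feature")` tells you which feature costs the most. Calling `cost_by(logs, "user_id")` tells you which users do.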

Now let’s talk about one popular option.

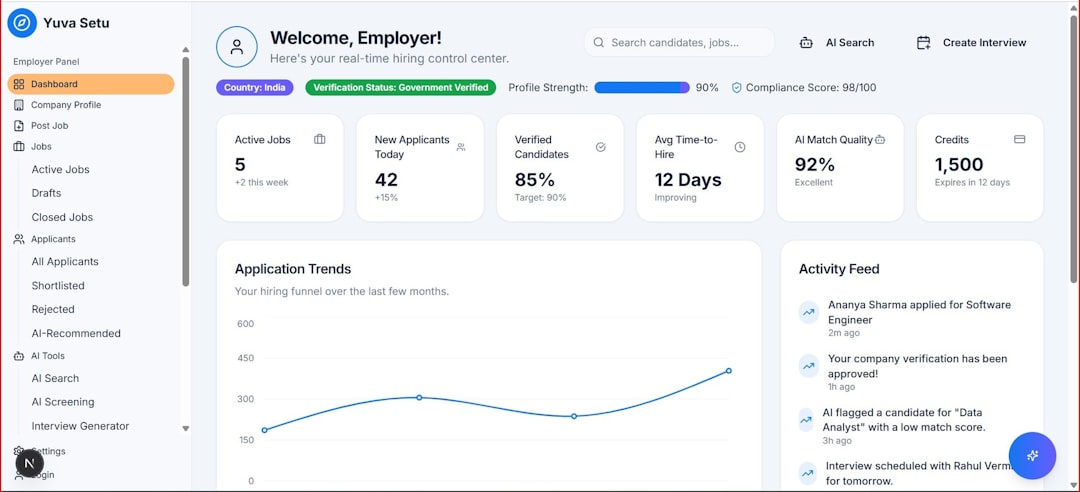

Helicone: A Simple Way to See Everything

Helicone is designed specifically for monitoring LLM usage.

It works as a lightweight proxy. You route your API calls through Helicone. It logs everything automatically.

No complicated setup. No major code changes.
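Here is a rough sketch of what that routing can look like for an OpenAI-style client. The base URL and header names below reflect my understanding of Helicone's proxy integration and should be treated as assumptions. Confirm them against Helicone's current documentation. The helper `helicone_client_kwargs` and the `Feature` property name are hypothetical.

```python
from typing import Optional

# Assumed Helicone proxy endpoint for OpenAI traffic; verify in Helicone's docs.
HELICONE_OPENAI_BASE_URL = "https://oai.helicone.ai/v1"


def helicone_client_kwargs(openai_key: str, helicone_key: str,
                           user_id: Optional[str] = None,
                           feature: Optional[str] = None) -> dict:
    """Build client settings that send requests through the proxy."""
    # Assumed header names; the proxy uses them to authenticate and tag requests.
    headers = {"Helicone-Auth": f"Bearer {helicone_key}"}
    if user_id:
        headers["Helicone-User-Id"] = user_id
    if feature:
        headers["Helicone-Property-Feature"] = feature
    return {
        "api_key": openai_key,
        "base_url": HELICONE_OPENAI_BASE_URL,
        "default_headers": headers,
    }
```

With the OpenAI Python SDK (version 1 or later), you would pass these settings as `OpenAI(**helicone_client_kwargs(...))`. The rest of your code stays the same. Only the base URL and headers change.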

Key features:

- Request and response logging

- Token usage tracking

- Cost breakdown by endpoint

- User-level analytics

- Error monitoring

- Prompt inspection

The magic is visibility.

You can see which prompts are too long. Which features are expensive. Which users generate the most cost.

This makes optimization practical.

Example: Without vs With Helicone

Without monitoring:

- You send long prompts

- You allow large max tokens

- You log nothing

- You get a surprise bill

With monitoring:

- You notice responses are 3x longer than needed

- You reduce max tokens

- You clean up prompts

- You cut costs by 30% or more

Small tweaks. Big savings.

Other AI Cost Optimization Tools

Helicone is not alone. There are other platforms that help monitor and optimize AI API costs.

Here are a few popular ones:

1. Langfuse

Langfuse focuses on observability and analytics for LLM applications.

It tracks:

- Traces

- Prompt versions

- Token usage

- Costs

- User sessions

It is strong on debugging and evaluation.

2. Portkey

Portkey acts as an AI gateway.

It adds:

- Routing between models

- Fallback handling

- Cost tracking

- Rate limiting

- Security controls

This is helpful if you use multiple providers.

3. OpenRouter Analytics

If you use OpenRouter, you get built-in analytics.

It shows:

- Usage by model

- Token consumption

- Performance comparison

It is simpler but useful.

Quick Comparison Chart

| Platform | Main Focus | Cost Tracking | Multi-Model Support | Best For |
|---|---|---|---|---|
| Helicone | LLM observability | Yes, detailed | Yes | Startups and product teams |
| Langfuse | Tracing and analytics | Yes | Yes | Teams optimizing prompts |
| Portkey | AI gateway | Yes | Strong multi-model routing | Enterprises and scaling apps |
| OpenRouter Analytics | Basic usage tracking | Yes | Within platform | Developers using OpenRouter |

How These Tools Actually Reduce Costs

Monitoring is step one. Optimization is step two.

Here is how these platforms help you save real money.

1. Prompt Optimization

Many prompts are too long.

You might be:

- Repeating instructions

- Adding unnecessary context

- Using large system messages

Cost platforms show token counts per request.


When you see that one prompt uses 2,000 tokens, you rethink it.

Cutting 500 tokens per request can reduce costs dramatically at scale.
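A quick worked example shows why. The request volume and the price are assumptions picked for easy math, and `monthly_prompt_savings` is a hypothetical helper.

```python
def monthly_prompt_savings(tokens_saved_per_request: int,
                           requests_per_month: int,
                           input_price_per_million: float) -> float:
    """USD saved per month by trimming input tokens from every request."""
    tokens_saved = tokens_saved_per_request * requests_per_month
    return tokens_saved * input_price_per_million / 1_000_000


# Trim a 2,000-token prompt down to 1,500 tokens.
saved_per_request = 2_000 - 1_500

# Assumed: one million requests a month at $2 per million input tokens.
savings = monthly_prompt_savings(saved_per_request, 1_000_000, 2.0)
# savings is 1000.0, i.e. $1,000 a month from one prompt cleanup.
```

The saving grows linearly with traffic. Ten times the requests means ten times the savings.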

2. Response Length Control

Sometimes the model talks too much.

You can:

- Lower max tokens

- Add “be concise” instructions

- Switch to smaller models

Monitoring makes these changes measurable.
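The first two levers can be sketched as a request builder. The field names follow the common OpenAI-style chat request shape, which is an assumption here. Some providers and newer models use a different name for the output cap, such as `max_completion_tokens`. The model name and `concise_chat_request` are placeholders.

```python
CONCISE_INSTRUCTION = "Answer concisely. Skip preamble and do not repeat the question."


def concise_chat_request(model: str, user_message: str,
                         max_output_tokens: int = 256) -> dict:
    """Build a chat request body with a hard output cap and a brevity instruction."""
    if max_output_tokens <= 0:
        raise ValueError("max_output_tokens must be positive")
    return {
        "model": model,
        # Hard ceiling on billed output tokens for this request.
        "max_tokens": max_output_tokens,
        "messages": [
            {"role": "system", "content": CONCISE_INSTRUCTION},
            {"role": "user", "content": user_message},
        ],
    }


# "small-model" is a placeholder, not a real model name.
body = concise_chat_request("small-model", "Summarize our refund policy.", 150)
```

The cap is a safety net. A hard cap can cut answers off mid-sentence, so the brevity instruction does the real work.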

3. Model Selection Strategy

Not every task needs the most advanced model.

You can:

- Use cheaper models for simple tasks

- Reserve premium models for complex reasoning

- Route requests intelligently

If most of your traffic is simple tasks, this alone can cut costs substantially. In some cases, by half or more.
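A very simple router might look like the sketch below. The model names and the task categories are placeholders. Real routing rules should come from your own quality tests, not from this list.

```python
# Placeholder model names; substitute the models you actually use.
CHEAP_MODEL = "small-model"
PREMIUM_MODEL = "large-model"

# Assumed set of tasks where a smaller model is good enough.
SIMPLE_TASKS = {"classification", "extraction", "short_summary"}


def choose_model(task_type: str) -> str:
    """Send known-simple tasks to the cheap model, everything else to premium."""
    if task_type in SIMPLE_TASKS:
        return CHEAP_MODEL
    # Unknown or complex tasks default to the stronger model to protect quality.
    return PREMIUM_MODEL
```

Defaulting to the premium model is the cautious choice. You move a task to the cheap model only after you have checked that quality holds up.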

4. Detecting Abuse and Overuse

If you run a public app, some users will test limits.

They might:

- Send extremely long prompts

- Spam the API

- Generate massive outputs

Cost platforms let you identify heavy users.

You can then:

- Add rate limits

- Set quotas

- Require paid plans

This protects your margins.
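A quota can be as simple as a counter per user. This is an in-memory sketch with a hypothetical `DailyTokenQuota` class. A production version would store counts in a shared database or cache and reset them on a schedule.

```python
from collections import defaultdict


class DailyTokenQuota:
    """Tracks tokens used per user and refuses requests past a daily limit."""

    def __init__(self, limit_per_user: int) -> None:
        self.limit_per_user = limit_per_user
        self._used: dict[str, int] = defaultdict(int)

    def try_consume(self, user_id: str, tokens: int) -> bool:
        """Record the usage and return True, or return False if it would exceed the limit."""
        if self._used[user_id] + tokens > self.limit_per_user:
            return False
        self._used[user_id] += tokens
        return True

    def remaining(self, user_id: str) -> int:
        """Tokens the user can still spend today."""
        return self.limit_per_user - self._used[user_id]

    def reset(self) -> None:
        """Clear all counters, e.g. at midnight."""
        self._used.clear()
```

When `try_consume` returns `False`, your app can show an upgrade prompt instead of calling the model.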

5. Real-Time Alerts

Imagine getting notified when spending spikes.

That is powerful.

You can set thresholds. When usage crosses a limit, you get an alert.

No more end-of-month surprises.
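The threshold logic itself is tiny. Here is a sketch with a hypothetical `check_spend` function and an assumed warning level at 80% of budget. How the alert reaches you (email, chat message, pager) depends on your platform.

```python
def check_spend(spend_so_far: float, budget: float, warn_ratio: float = 0.8) -> str:
    """Classify current spend as "ok", "warning", or "over_budget"."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    if spend_so_far >= budget:
        return "over_budget"
    if spend_so_far >= budget * warn_ratio:
        return "warning"
    return "ok"
```

Run the check every time you record a request's cost. You hear about a spike the same hour it happens.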

What It Looks Like in a Real Workflow

Here is a simple flow:

- User sends a message in your app

- Your backend forwards it through Helicone

- Helicone logs tokens and cost

- The AI provider responds

- The data appears in your dashboard

Now you have full transparency.

You can filter by:

- User ID

- Feature name

- Model

- Date range

This makes product decisions smarter.
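If you export your logs, the same filters are easy to reproduce in code. The record shape and the `filter_logs` helper below are hypothetical, not any dashboard's export format.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class LoggedRequest:
    """One exported log row (hypothetical schema)."""
    user_id: str
    feature: str
    model: str
    day: date
    cost_usd: float


def filter_logs(logs: list[LoggedRequest],
                user_id: Optional[str] = None,
                feature: Optional[str] = None,
                model: Optional[str] = None,
                start: Optional[date] = None,
                end: Optional[date] = None) -> list[LoggedRequest]:
    """Keep rows matching every filter that is set; the date range is inclusive."""
    result = []
    for log in logs:
        if user_id is not None and log.user_id != user_id:
            continue
        if feature is not None and log.feature != feature:
            continue
        if model is not None and log.model != model:
            continue
        if start is not None and log.day < start:
            continue
        if end is not None and log.day > end:
            continue
        result.append(log)
    return result
```

For example, `sum(r.cost_usd for r in filter_logs(logs, feature="chat"))` gives the total spend of one feature.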

Startups Love These Platforms

Why?

Because startups run on tight budgets.

AI is often their biggest variable cost.

If revenue is $20,000 per month but AI costs are $12,000, you have a problem.
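Here is that math, counting AI spend as the only cost for simplicity. The 30% reduction is an assumed figure for illustration, and `margin_after_ai_cost` is a hypothetical helper.

```python
def margin_after_ai_cost(revenue: float, ai_cost: float) -> float:
    """Share of revenue left after paying for AI (ignores all other costs)."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - ai_cost) / revenue


# $20,000 revenue with $12,000 in AI costs leaves 40% of revenue.
before = margin_after_ai_cost(20_000, 12_000)

# Assumed: optimization trims AI spend by 30%, from $12,000 to $8,400.
after = margin_after_ai_cost(20_000, 8_400)
# The share left over rises from 40% to about 58%.
```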

By optimizing:

- You improve margins

- You extend runway

- You reduce risk

Investors also like clean, predictable cost structures.

Common Mistakes to Avoid

Even with monitoring tools, teams make errors.

- Ignoring small inefficiencies – small waste scales fast

- Not reviewing dashboards regularly – data unused is useless

- Over-engineering too early – optimize based on real data

- Using only the most advanced models – match model to task

The goal is balance.

Quality and cost must work together.

The Future of AI Cost Management

As AI adoption grows, cost management will become standard.

We will see:

- Automated model switching

- Dynamic token compression

- Smarter caching systems

- Built-in budget enforcement

Eventually, optimization will be automatic.

But for now, visibility is king.

Final Thoughts

Building with AI is exciting. The possibilities feel endless.

But costs are real.

If you do not track them, they will surprise you.

Platforms like Helicone give you clarity. Others like Langfuse and Portkey add flexibility and routing power.

The formula is simple:

- Track everything

- Measure token usage

- Optimize prompts

- Choose the right model

- Set limits and alerts

Do this, and your AI app becomes more sustainable.

Smarter engineering. Healthier margins.

And no more heart attacks when the cloud bill arrives.