Imagine you trained a powerful AI model. It detects objects. It translates languages. It writes text. But when you try to run it in the real world, it feels… slow. Like a sports car stuck in traffic. That is where inference optimization engines come in. They turn your trained model into a speed machine.

TLDR: Inference optimization engines like TensorRT help AI models run faster and more efficiently after training. They shrink, tune, and rewire models for real-world use. This leads to lower latency, smaller memory usage, and better hardware performance. If you care about speed and scale, these tools are your best friend.

Let’s break it all down in a fun and simple way.

What Is Inference, Really?

Machine learning has two big phases:

- Training – The model learns from data.

- Inference – The model makes predictions.

Training is like studying for exams. It takes time. It needs lots of compute power.

Inference is taking the exam. It must be fast. Especially in the real world.

Imagine:

- Self-driving cars deciding in milliseconds.

- Voice assistants responding instantly.

- Fraud detection systems blocking payments in real time.

If inference is slow, the product feels broken.

This is where optimization engines step in.

What Is an Inference Optimization Engine?

An inference optimization engine is software that:

- Takes a trained model

- Rewrites and optimizes it

- Deploys it to run efficiently on specific hardware

Think of it like a mechanic tuning an engine.

The model already works. But it can work better.

Tools like TensorRT, ONNX Runtime, and OpenVINO remove bottlenecks. They reduce memory usage. They fuse operations. They lower numerical precision where accuracy allows.

The result?

- Faster predictions

- Less hardware cost

- Lower power consumption

- Better scalability

Meet TensorRT: The Speed Specialist

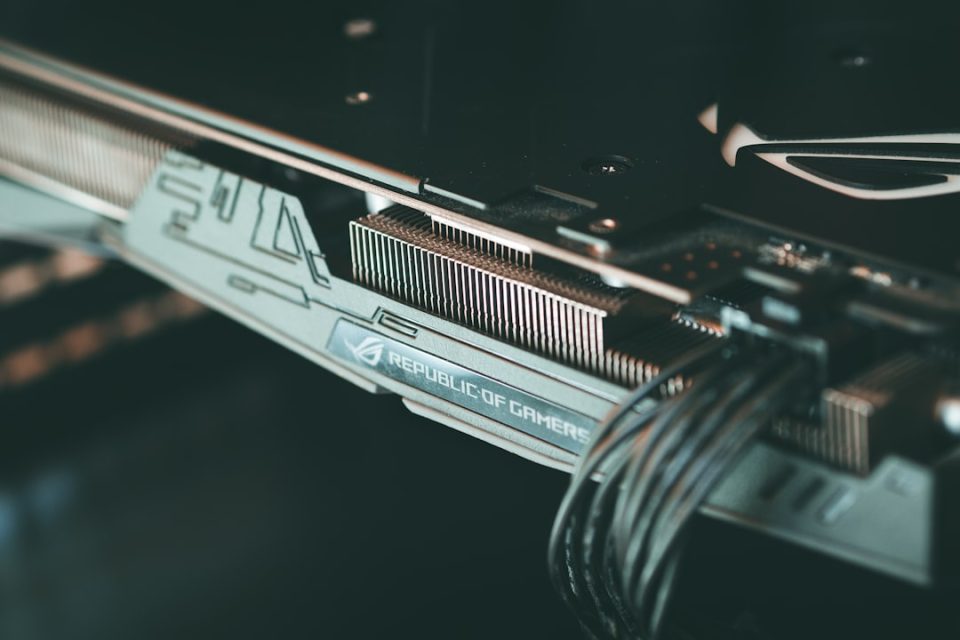

TensorRT is an inference optimization engine built by NVIDIA. It is designed for GPUs.

If your AI runs on an NVIDIA GPU, TensorRT is like giving it turbo boost.

Here’s what TensorRT does:

- Layer fusion – Combines multiple neural network layers into one.

- Precision calibration – Converts models from FP32 to FP16 or INT8, using sample data to calibrate INT8 value ranges.

- Kernel auto-tuning – Finds the fastest way to run operations.

- Memory optimization – Reduces memory footprint smartly.

Imagine you trained a model in PyTorch.

Normally, it runs through many separate operations. Each one takes time. Each one allocates memory.

TensorRT says:

“Why not combine steps? Why not streamline this? Why not use lower precision if accuracy stays good?”

And suddenly, your model can run 2x, 5x, sometimes even 10x faster, depending on the model and the GPU.

Why Speed Matters So Much

Let’s talk about latency.

Latency is how long it takes to get a prediction.

For some systems, a delay of 100 milliseconds is fine.

For others, 10 milliseconds is too slow.

Consider:

- Autonomous driving – Decisions must be instant.

- High-frequency trading – Microseconds can mean money.

- AR and VR – Lag breaks immersion.

Optimization engines reduce latency dramatically.

They also improve throughput. That means handling more predictions per second.

This is crucial for cloud APIs serving millions of users.
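
Latency and throughput are easy to measure yourself. Here is a minimal sketch using only the Python standard library. The `fake_model` function is a made-up stand-in for a real model call, and the run counts are arbitrary choices, not recommendations.

```python
import statistics
import time

def fake_model(x):
    """Stand-in for a real model call (hypothetical); does a little arithmetic."""
    return sum(v * v for v in x)

def measure(predict, sample, runs=200, warmup=20):
    """Return (median latency in ms, throughput in predictions per second)."""
    for _ in range(warmup):          # warm-up calls are excluded from timing
        predict(sample)
    latencies = []
    start = time.perf_counter()
    for _ in range(runs):
        t0 = time.perf_counter()
        predict(sample)
        latencies.append(time.perf_counter() - t0)
    total = time.perf_counter() - start
    return statistics.median(latencies) * 1000.0, runs / total

latency_ms, throughput = measure(fake_model, list(range(1000)))
print(f"median latency: {latency_ms:.3f} ms, throughput: {throughput:.0f} predictions/s")
```

Swap `fake_model` for your own predict function and run it before and after optimization to see both numbers move.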

Core Techniques Used in Optimization

Let’s simplify some of the magic happening behind the scenes.

1. Precision Reduction

Models are often trained in 32-bit floating point (FP32).

But during inference, you may not need that much precision.

Optimization engines convert models to:

- FP16 (half precision)

- INT8 (8-bit integers)

This makes calculations much faster on hardware that supports these formats natively.

And uses less memory.

If accuracy stays within acceptable limits, it is a big win.
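
To make this concrete, here is a rough sketch of what lower precision does to a handful of numbers. It assumes simple symmetric per-tensor INT8 quantization and made-up weights. Real engines use more careful schemes and calibrate on sample data, so treat this as an illustration only.

```python
import struct

def to_fp16(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]

def quantize_int8(values):
    """Symmetric per-tensor INT8 quantization: returns (integer codes, scale)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Map integer codes back to approximate real values."""
    return [c * scale for c in codes]

weights = [0.8123, -0.3301, 0.0457, -1.27, 0.5]   # made-up FP32 weights

fp16 = [to_fp16(w) for w in weights]
codes, scale = quantize_int8(weights)
int8 = dequantize(codes, scale)

fp16_err = max(abs(a - b) for a, b in zip(weights, fp16))
int8_err = max(abs(a - b) for a, b in zip(weights, int8))
print("INT8 codes:", codes)
print(f"max error FP16: {fp16_err:.6f}, INT8: {int8_err:.6f}")
```

Each INT8 value takes one byte instead of four. The price is a rounding error of at most half the scale per value, which is why accuracy has to be checked afterwards.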

2. Layer Fusion

Neural networks have many layers.

Sometimes operations can be combined.

Instead of:

- Layer A runs

- Memory write

- Layer B runs

- Memory write

You get:

- Fused Layer AB runs once

Less memory movement. Less overhead. More speed.
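
The idea can be sketched in plain Python. The two "layers" here, a bias add and a ReLU, are chosen purely for illustration. Real engines fuse GPU kernels, not Python loops, but the saving has the same shape: one pass and no intermediate buffer.

```python
def bias_add(xs, bias):
    """Layer A: add a bias, writing a full intermediate list."""
    return [x + bias for x in xs]

def relu(xs):
    """Layer B: clamp negatives to zero, writing another full list."""
    return [x if x > 0.0 else 0.0 for x in xs]

def unfused(xs, bias):
    intermediate = bias_add(xs, bias)   # first pass, first memory write
    return relu(intermediate)           # second pass, second memory write

def fused(xs, bias):
    """Fused layer AB: one pass over the data, no intermediate list."""
    out = []
    for x in xs:
        y = x + bias
        out.append(y if y > 0.0 else 0.0)
    return out

inputs = [-2.0, -0.5, 0.0, 0.25, 3.0]
assert unfused(inputs, 0.5) == fused(inputs, 0.5)   # same result either way
print(fused(inputs, 0.5))   # [0.0, 0.0, 0.5, 0.75, 3.5]
```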

3. Kernel Tuning

GPUs execute small programs called kernels.

TensorRT benchmarks different implementations.

It picks the fastest one for your exact hardware.

It is custom tailoring for silicon.
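
Here is a toy version of that idea, assuming three interchangeable Python implementations of a dot product as the "kernels". It is not how TensorRT works internally, just the same benchmark-and-pick pattern at a small scale.

```python
import operator
import timeit

def dot_loop(a, b):
    """Candidate 1: explicit index loop."""
    total = 0.0
    for i in range(len(a)):
        total += a[i] * b[i]
    return total

def dot_zip(a, b):
    """Candidate 2: generator expression over zipped pairs."""
    return sum(x * y for x, y in zip(a, b))

def dot_map(a, b):
    """Candidate 3: map with operator.mul."""
    return sum(map(operator.mul, a, b))

def autotune(candidates, a, b, repeats=5, number=20):
    """Time every candidate on the given inputs; return (best name, timings)."""
    timings = {}
    for name, fn in candidates.items():
        timings[name] = min(timeit.repeat(lambda: fn(a, b), repeat=repeats, number=number))
    best = min(timings, key=timings.get)
    return best, timings

# Small integer-valued floats keep every candidate's result exact and identical.
a = [float(i % 7) for i in range(2000)]
b = [float(i % 5) for i in range(2000)]
candidates = {"loop": dot_loop, "zip": dot_zip, "map": dot_map}
best, timings = autotune(candidates, a, b)
print("fastest on this machine:", best)
```

The winner can change from machine to machine, which is exactly why the tuning has to happen on the target hardware.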

TensorRT vs Other Optimization Engines

TensorRT is powerful. But it is not alone.

Here is a simple comparison:

| Tool | Best For | Hardware Focus | Strength |
|---|---|---|---|
| TensorRT | High-performance GPU inference | NVIDIA GPUs | Extreme speed and GPU optimization |
| ONNX Runtime | Cross-platform deployment | CPU, GPU, diverse accelerators | Flexibility and portability |
| OpenVINO | Edge and Intel devices | Intel CPU, GPU, VPU | Strong edge device optimization |
| TFLite | Mobile deployment | Mobile CPU, Edge TPU | Lightweight for smartphones |

If you live in the NVIDIA ecosystem, TensorRT shines.

If you need cross-platform simplicity, ONNX Runtime might be easier.

Each engine has its sweet spot.

Real-World Example: From Slow to Lightning Fast

Let’s say you built an image classification model.

Raw PyTorch inference time: 40 milliseconds per image.

You convert it using TensorRT with FP16 precision.

New inference time: 12 milliseconds.

Switch to INT8 calibration.

Now: 7 milliseconds.

Accuracy drops by only 0.5%.

That is often acceptable.

Now imagine serving 10,000 requests per second.

The cost savings in cloud GPU usage are massive.
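
A back-of-the-envelope calculation shows the scale. This sketch takes the latencies from the example above and assumes each GPU handles one request at a time, with no batching or concurrency. Real deployments batch requests, so actual GPU counts will differ.

```python
def gpus_needed(requests_per_second, latency_ms):
    """GPUs required if each GPU serves one request at a time (no batching)."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-requests_per_second * latency_ms // 1000)

REQUESTS_PER_SECOND = 10_000
latencies_ms = {"PyTorch FP32": 40, "TensorRT FP16": 12, "TensorRT INT8": 7}

for name, ms in latencies_ms.items():
    print(f"{name}: {ms} ms per image -> {gpus_needed(REQUESTS_PER_SECOND, ms)} GPUs")
```

Under these assumptions the fleet shrinks from 400 GPUs to 120 with FP16 and to 70 with INT8.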

Edge AI and Power Efficiency

Optimization is not just about speed.

It is also about power.

Edge devices have strict limitations:

- Drones

- Robots

- Security cameras

- Smart sensors

They cannot run giant models at full precision.

Optimization engines shrink and compress models.

They reduce:

- Memory usage

- Battery drain

- Heat output

This makes AI possible in tiny hardware.
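
The memory side is simple arithmetic. This sketch assumes a hypothetical model with 25 million parameters and counts the weights only, ignoring activations and runtime overhead.

```python
BYTES_PER_VALUE = {"FP32": 4, "FP16": 2, "INT8": 1}

def weight_size_mb(num_parameters, precision):
    """Approximate size of the weights alone, in megabytes (10**6 bytes)."""
    return num_parameters * BYTES_PER_VALUE[precision] / 1_000_000

params = 25_000_000  # hypothetical model size, chosen for illustration
for precision in BYTES_PER_VALUE:
    print(f"{precision}: {weight_size_mb(params, precision):.0f} MB")
```

That works out to 100 MB in FP32, 50 MB in FP16, and 25 MB in INT8, which can decide whether a model fits on a small device at all.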

How the Workflow Typically Looks

Here is a simple pipeline:

- Train model in PyTorch or TensorFlow.

- Export model to ONNX format.

- Feed model into TensorRT.

- Apply precision calibration and optimization.

- Deploy optimized engine to production.

Once deployed, the optimized engine needs only the inference runtime.

No unnecessary training components.

No extra overhead.

Just pure inference power.
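
The export and build steps might look roughly like this. It is a sketch, not a definitive recipe: the file names and the `input`/`output` tensor names are assumptions, the build step uses NVIDIA's `trtexec` command-line tool that ships with TensorRT, and INT8 is left out because it needs calibration data.

```python
def export_to_onnx(model, example_input, onnx_path="model.onnx"):
    """Export a trained PyTorch model to ONNX."""
    import torch  # imported lazily so the rest of the sketch runs without PyTorch

    model.eval()  # switch off training-only behaviour such as dropout
    torch.onnx.export(
        model,
        (example_input,),
        onnx_path,
        input_names=["input"],     # assumed tensor names
        output_names=["output"],
    )
    return onnx_path

def trtexec_command(onnx_path, engine_path, precision="fp16"):
    """Build the trtexec command line that compiles the ONNX file into an engine."""
    if precision not in ("fp32", "fp16"):
        raise ValueError("this sketch only covers fp32 and fp16")
    cmd = ["trtexec", f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]
    if precision == "fp16":
        cmd.append("--fp16")
    return cmd

print(" ".join(trtexec_command("model.onnx", "model.engine")))
# trtexec --onnx=model.onnx --saveEngine=model.engine --fp16
```

On a machine with TensorRT installed, the command list could be passed to `subprocess.run` to produce the engine file.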

Challenges to Keep in Mind

Optimization is powerful. But not magic.

There are trade-offs.

- Lower precision can slightly reduce accuracy.

- Hardware-specific engines reduce portability. An engine built for one GPU may need rebuilding for another.

- Debugging optimized models can be harder.

You must test carefully.

You must validate output.

Production AI needs trust.
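
One simple way to validate is to run the same inputs through both versions and compare. In this sketch the two score lists are made-up numbers standing in for real model outputs, and what counts as "close enough" is left to you.

```python
def argmax(scores):
    """Index of the highest score (first one wins on ties)."""
    return max(range(len(scores)), key=lambda i: scores[i])

def compare_outputs(baseline, optimized):
    """Return (max absolute difference, fraction of samples with the same top-1 class)."""
    max_diff = 0.0
    agree = 0
    for ref, opt in zip(baseline, optimized):
        max_diff = max(max_diff, max(abs(r - o) for r, o in zip(ref, opt)))
        agree += argmax(ref) == argmax(opt)
    return max_diff, agree / len(baseline)

# Made-up class scores for three samples, before and after optimization.
baseline = [[0.10, 0.70, 0.20], [0.55, 0.40, 0.05], [0.33, 0.33, 0.34]]
optimized = [[0.11, 0.69, 0.20], [0.54, 0.41, 0.05], [0.34, 0.33, 0.33]]

max_diff, agreement = compare_outputs(baseline, optimized)
print(f"max abs difference: {max_diff:.3f}, top-1 agreement: {agreement:.0%}")
```

Notice the third sample: the scores barely move, yet the predicted class flips. Small numeric differences matter most on borderline inputs, so test on data that includes them.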

The Future of Inference Optimization

Models are getting bigger.

Think large language models. Vision transformers. Multimodal giants.

Without optimization, deployment cost would explode.

The future includes:

- Automatic quantization

- Smarter compiler-level graph optimization

- Hardware-aware AI architectures

- Specialized AI inference chips

Inference engines are becoming more like compilers.

You write a model once.

The engine figures out the fastest way to run it anywhere.

Why You Should Care

If you are:

- An ML engineer

- A startup founder

- A product manager building AI features

- A developer deploying models to production

You cannot ignore inference optimization.

Training makes your model smart.

Optimization makes it usable.

It saves money.

It reduces latency.

It improves user experience.

It unlocks edge deployment.

It scales globally.

Final Thoughts

Inference optimization engines like TensorRT are the unsung heroes of AI.

They do not create smarter models.

They create faster ones.

And in the real world, speed is everything.

Without optimization, your AI is a lab experiment.

With optimization, it becomes a product.

So next time your model feels slow, do not train a bigger one.

Tune it. Compress it. Optimize it.

Because sometimes, the biggest breakthrough is not smarter AI.

It is faster AI.